Firecrawl is a web data API that converts websites into clean markdown or structured JSON for AI applications. It handles JavaScript rendering, proxy rotation, and anti-bot detection through its proprietary Fire-Engine technology, delivering broad web coverage without requiring users to manage scraping infrastructure. Pricing starts free with 500 credits and scales from $16 per month to $599 per month based on volume. For developers building RAG systems, AI agents, or any application that needs reliable web data, Firecrawl removes the hardest part of the data pipeline.

I've gone through many iterations of scraping with AI. BeautifulSoup scripts that break when a site changes layout. Scrapy pipelines that choke on JavaScript. Custom proxy setups that get banned within hours. Firecrawl replaces all of that with a single API call, and the thing I keep coming back to is simple: it just works.

I use it through the MCP server integration in Claude Code, which means my AI coding assistant can scrape, crawl, and search the web without me writing any scraping code. I used it to crawl Firecrawl's own website while researching this review. This is my honest take after months of real usage.

One thing worth knowing before you read further: almost every "Firecrawl review" currently ranking on Google is actually written by a competitor. Eesel.ai, Skyvern, Bright Data, CapSolver. They all publish reviews that happen to conclude you should use their tool instead. If you run a software company, this is actually a smart SEO tactic and I have no issue with it. But you should know what you're reading. This review has no competing product to push. I use Firecrawl because it works.

What Is Firecrawl?

Firecrawl is a web data API built for AI applications. You give it a URL (or a search query, or a natural language prompt), and it gives you back clean markdown or structured JSON. The kind of output you can feed directly into an LLM without spending hours cleaning HTML.

The company was founded by Caleb Peffer (CEO), Eric Ciarla (CMO), and Nicolas Camara (CTO). It started as an internal scraper at Mendable, their previous AI helpdesk startup used by Snapchat, Coinbase, and MongoDB. They kept hitting the same wall: getting clean web data for AI was unnecessarily hard. Every app rebuilt the same infrastructure differently. So they built it to solve the problem once.

The product emerged from Y Combinator S22 and raised a $14.5M Series A in August 2025 led by Nexus Venture Partners, with backing from Shopify CEO Tobias Lutke and Postman CEO Abhinav Asthana. Total funding sits at $16.2M. The open-source repo has over 87,000 GitHub stars and the company reports 350,000+ developers and 80,000+ companies using the platform.

What makes it different from traditional scraping tools: it's AI-native. The output is designed to be consumed by language models, not parsed by humans reading HTML. That sounds like marketing, but in practice the difference is real. The markdown output from a /scrape call is clean enough to drop directly into a RAG pipeline or chat context.

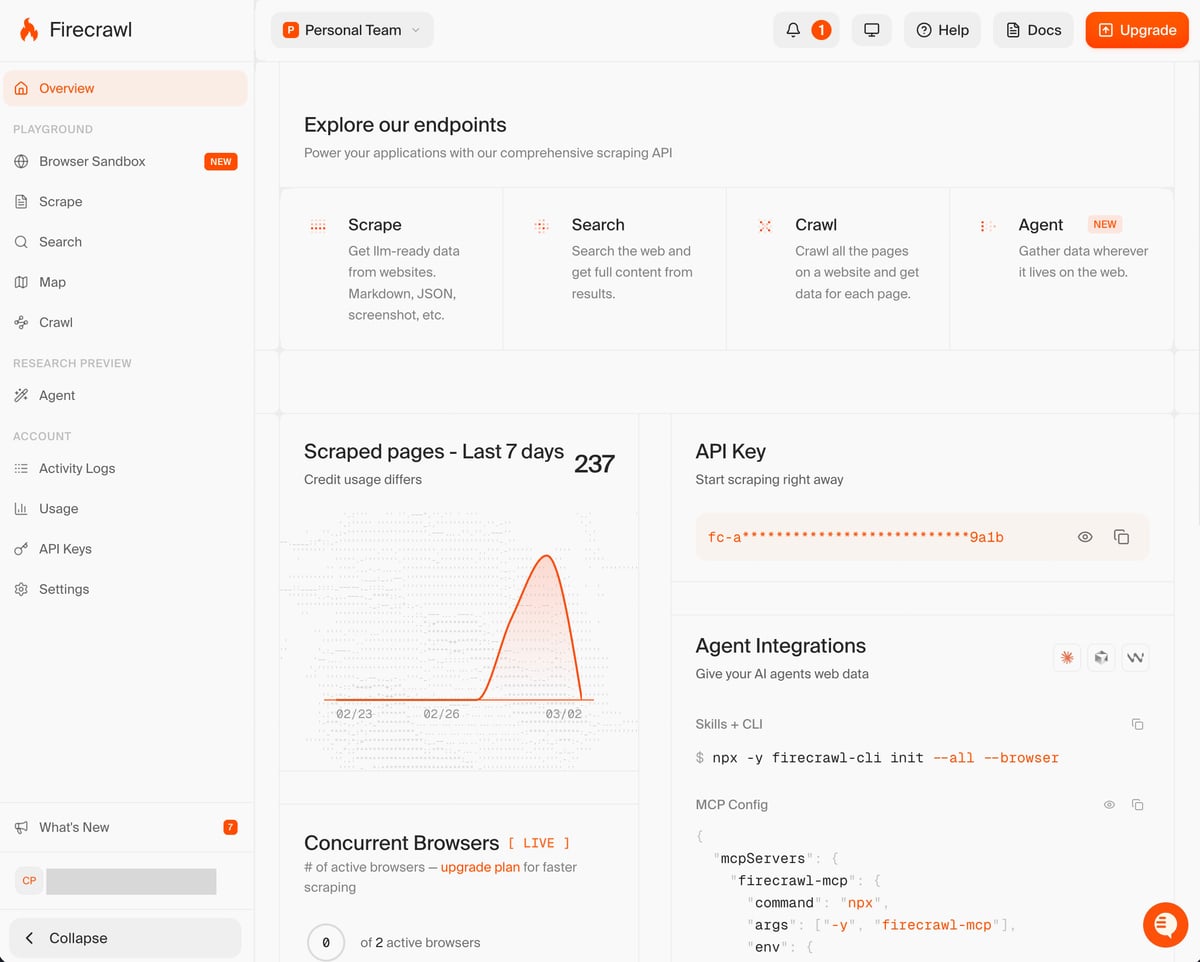

The team has also done a good job on the product side. The dashboard is clean, the docs are well-organized, and they've been active on social media shipping new features. They've lost a bit of momentum recently as the AI tooling space evolves faster than anyone can keep up with, but they seem like a smart company that keeps finding new solutions. The Browser Sandbox launch in February 2026 is a good example.

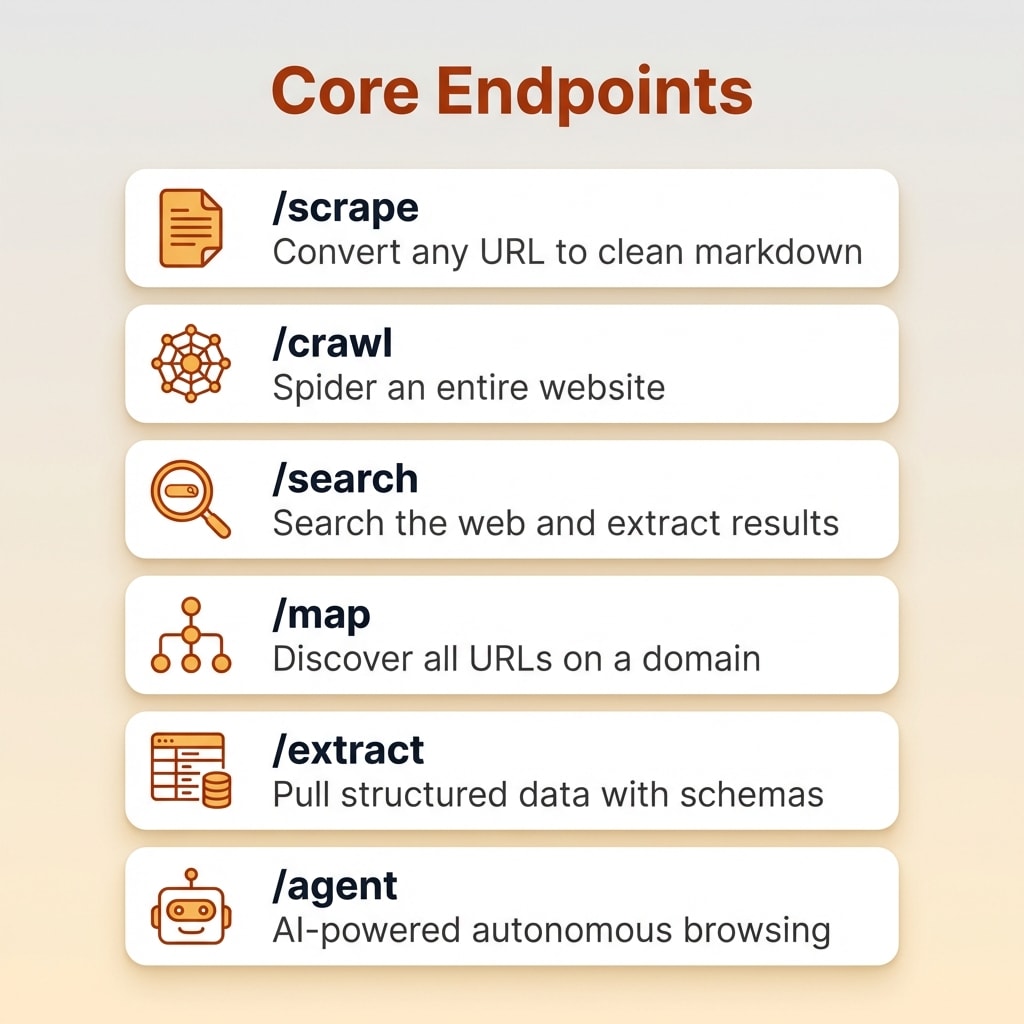

What You Get: Features and Endpoints

/scrape: Single Page to Markdown

The bread and butter. Give it a URL, get back clean markdown. It handles JavaScript rendering automatically through Fire-Engine, so single-page applications and dynamically-loaded content work without any extra configuration. You can also request HTML, screenshots, links lists, or structured JSON via Pydantic schemas. One credit per page.

When I need to verify a pricing page, check a competitor's feature list, or pull data from a specific URL, one API call returns clean markdown. No browser automation setup, no proxy configuration, no HTML parsing.

/crawl: Full Site Crawling

Submit a URL and the crawler spiders the entire site up to your configured limit. It's an async operation: you submit the job, poll for completion, and get paginated results. Respects robots.txt by default. One credit per page crawled.

I use this regularly to build product dossiers. Point it at a product website, set a page limit, and get back structured markdown for every page. When I crawled firecrawl.dev for this review, it collected the entire site in about three minutes. Crawl speed varies. Some sites complete in seconds, others take minutes for similar page counts. No clear pattern, but reliability is good.

/search: Web Search with Content Extraction

This one is underrated. It performs a Google-like search and scrapes the full content of each result. So instead of just getting titles and URLs like a regular search API, you get the actual page content ready to analyze. About 2 credits for 10 results.

/map: URL Discovery

Quickly enumerate all URLs on a domain without scraping content. Useful for scoping out how large a site is before committing credits to a full crawl. One credit per call.

/agent: AI-Powered Data Gathering

The /agent endpoint uses the FIRE-1 model to navigate complex websites autonomously. You give it a natural language prompt and it searches, clicks, navigates, and extracts data on its own. In January 2026, Parallel Agents (v2.8.0) launched for batching hundreds of queries simultaneously.

Honest assessment: /agent is still rough. It's in research preview and the results are inconsistent. Sometimes it returns exactly what you asked for. Other times it misses obvious information. At 10 credits per query (with up to 5 free per day during preview), it's worth experimenting with but not something I rely on yet.

Browser Sandbox (New, Feb 2026)

The newest feature gives agents a fully-managed remote browser environment. AI tools like Claude Code can launch a sandbox with Playwright pre-loaded to handle multi-step flows, login gates, form filling, and pagination. Sessions run in the cloud (2 credits per browser-minute) so nothing burdens your local machine.

The MCP Integration: Why I Actually Pay

This is the reason I use Firecrawl. I have the MCP server configured in Claude Code, which means my AI coding assistant can scrape, crawl, and search the web directly within my development environment. No switching tabs, no writing scraping scripts, no copy-pasting HTML.

The practical result: I tell Claude Code to crawl a product's website and it handles everything under the hood. The crawled data comes back as clean markdown. This review itself was built on data collected through that exact workflow.

For anyone using AI coding tools like Claude Code or Cursor, the MCP integration is the single most compelling reason to use this API. It turns your AI assistant into something that can actually see the live web.

Firecrawl Pricing: What It Actually Costs

Here's the full pricing breakdown.

| Plan | Price | Credits/Month | Concurrency | Cost Per Page |

|---|---|---|---|---|

| Free | $0 | 500 (one-time) | 2 | N/A |

| Hobby | $16/mo | 3,000 | 5 | ~$0.005 |

| Standard | $83/mo | 100,000 | 50 | ~$0.0008 |

| Growth | $333/mo | 500,000 | 100 | ~$0.0007 |

| Scale | $599/mo | 1,000,000 | 150 | ~$0.0006 |

| Enterprise | Custom | Custom | Custom | Bulk discounts |

Want to Hear From Me Weekly?

I'm putting together a weekly email with the real stuff. What's working, what's not, and the tools I'm actually using to grow. Not live yet, but you can grab a spot. Totally free!

The Credit System Explained

Most endpoints cost 1 credit per page (/scrape, /crawl, /map). But the advanced features cost more:

- /search: ~0.2 credits per result

- /agent (FIRE-1): 10 credits per query

- /browser (sandbox): 2 credits per browser-minute

- Failed requests: No charge (except

/agent, which uses credits even on failure)

My Honest Take on Pricing

I'm a hobby user. A typical month of content research uses 2,000-3,000 credits, which fits the Hobby plan at $16/month. A single site crawl might use a few hundred credits. Running 10 SERP searches costs about 20 credits. The per-unit economics are fine.

But here's what annoys me: credits don't roll over. If I use 500 credits one month and 5,000 the next, I've wasted the difference. My usage is bursty. I'll use Firecrawl heavily for a week while researching a product silo, then barely touch it for three weeks. A monthly subscription with use-it-or-lose-it credits is the wrong model for that pattern. I'd much prefer pay-as-you-go pricing.

The workaround is obvious: subscribe when you have a project, cancel when you don't. That's what I do. But it's friction that shouldn't exist.

The free tier (500 one-time credits) is fine for testing. You'll know within those 500 credits whether the output quality meets your needs. But it's one test run, not a trial period. Expect to hit the paywall within your first session.

At Scale, It Gets Expensive

If you're building software that relies on web scraping, the math changes. Crawling 50,000 pages per month puts you in Standard territory at $83/month. One Hacker News commenter called the pricing "egregiously expensive" for heavy use, and that's fair. At scale you need to factor Firecrawl into your margins. For a previous AI SEO project, I ran the numbers and the credit costs would have been a real line item. Beyond ~500,000 pages per month, compare with Apify or self-hosted solutions.

Is It Worth It vs. Building Your Own?

Yes, unless you enjoy managing proxy rotation, CAPTCHA solving, headless browser farms, and anti-bot detection. I've tried the DIY route with BeautifulSoup and Scrapy. It works for simple sites. The moment you hit JavaScript-heavy pages or anti-bot measures, you're in for weeks of infrastructure work. It works really well. It's just definitely not free.

Where Firecrawl Falls Short

No Rollover Credits

This is my biggest gripe. Unused credits expire at the end of each billing cycle. For bursty users who scrape heavily one week and not at all the next three, you're paying for capacity you don't use. The subscription model assumes steady monthly usage, which doesn't match how most developers actually work. Pay-as-you-go would be a better fit for the majority of users below the Standard tier.

Some Major Sites Are Blocked

Social media and paywalled platforms are explicitly excluded. Reddit, Twitter, LinkedIn. When I tried scraping a Reddit thread during SERP collection, it returned "Website Not Supported." The new Browser Sandbox with Playwright might help with some of these cases, but you'd be paying a premium (2 credits per minute) over setting up something locally with Playwright yourself. If your use case involves social media data, this is probably not the tool.

/agent Is Still Inconsistent

The FIRE-1 agent is promising but unreliable. Results vary significantly between runs on the same query. At 10 credits per attempt (and credits consumed even on failure), failed agent runs get expensive. Use it for experimentation, not production workflows. It will improve, but it's not there yet.

Self-Hosting Has Real Gaps

The open-source version sounds appealing until you realize it lacks Fire-Engine (the proxy/anti-bot system that makes the cloud service work). Self-hosting means bringing your own proxies, handling IP rotation yourself, and losing access to the /agent and /browser endpoints entirely. For most users, self-hosting creates more problems than it solves.

Crawl Speed Is Unpredictable

Some crawls complete in seconds. Others take minutes for similar-sized sites. There's no obvious pattern, and the documentation doesn't explain the variance. It's not a dealbreaker, but it makes it hard to predict how long a job will take.

Something New Is Coming...

A weekly breakdown of what's working across SaaS, ecommerce, and online marketing. The kind of stuff I'd tell a friend. Not launched yet, but you can grab a spot. Totally free!

Firecrawl vs. the Alternatives

I'll be honest: I haven't done deep side-by-side comparisons with every alternative. I use Firecrawl because it solved my problem and I haven't had a strong reason to switch. But here's what I know.

Crawl4AI: Free, open source, and AI-focused. Good for developers who want fine-grained control over their crawling behavior. The tradeoff: you manage your own infrastructure, and JavaScript rendering requires more setup. If you scrape infrequently and don't mind the setup time, Crawl4AI is worth considering. Firecrawl is better when you want clean output fast without managing anything.

Apify: A full-stack automation platform with 10,000+ pre-built scrapers ("Actors"). Much broader scope. I hear good things about Apify but I've never loved the interface. One of the things Firecrawl gets right is the user experience: clean dashboard, simple API, polished documentation. That matters when you're evaluating tools you'll use regularly. If you need a diverse ecosystem of scrapers or complex automation workflows, Apify is the more mature platform. At very large volumes, Apify's flexible pricing can win.

Beautiful Soup / Scrapy (DIY): Free but you manage everything. Fine for simple static sites. Painful the moment you encounter JavaScript rendering, CAPTCHAs, or anti-bot measures. I used Scrapy for years before switching. The time savings alone justify the cost.

Who Should Use Firecrawl

Great Fit

- AI developers building RAG or agent systems: If your application needs web data in a format LLMs can consume, this solves the hardest part of your data pipeline

- Developers using AI coding tools: The MCP integration with Claude Code and Cursor is a genuine workflow upgrade. Your AI assistant gets live web access.

- Content and SEO teams: Site crawling, SERP analysis, competitive research. The API automates the data collection that used to take hours of manual work

- Anyone who values time over money for scraping: If the alternative is building and maintaining your own scraping infrastructure, $16-83/month is trivial

Not a Great Fit

- Hobbyists who scrape occasionally: If you only need a few pages per week, Crawl4AI or even manual copy-paste might be sufficient

- Social media data needs: Reddit, Twitter, LinkedIn, and similar platforms are blocked. Look at platform-specific APIs instead

- Enterprises needing complex workflow automation: Apify's ecosystem of pre-built Actors is more suited to sophisticated multi-step automation pipelines

Frequently Asked Questions

Is Firecrawl free?

There's a free tier with 500 one-time credits, enough to scrape about 500 pages. After that, paid plans start at $16 per month. The free tier is enough to test the API but not enough for ongoing use.

Is Firecrawl open source?

Yes. The core is open source (AGPL-3.0) with 87,000+ GitHub stars. The self-hosted version lacks Fire-Engine, and the /agent and /browser endpoints are not available in self-host mode.

What is the Firecrawl MCP server?

It's an open-source Node service that connects the API to AI coding tools like Claude Code, Cursor, and VS Code. It lets AI assistants scrape and crawl the web directly within your development environment.

How does Firecrawl compare to Crawl4AI?

Crawl4AI is free with fine-grained control. Firecrawl offers managed infrastructure with automatic JavaScript rendering and anti-bot handling. Choose Crawl4AI for controlled, targeted crawls. Choose Firecrawl for fast, large-scale, AI-centric data gathering.

Can Firecrawl handle JavaScript-heavy websites?

Yes. Fire-Engine automatically renders JavaScript using headless browsers. The FIRE-1 agent can also click through UIs, fill forms, and solve simple CAPTCHAs for more complex sites.

The Bottom Line

Firecrawl works really well. The markdown output is clean, the API is simple, and the MCP integration with Claude Code is genuinely the best way to give your AI assistant live web access. It's a polished service that does exactly what it promises.

It's also definitely not free. The credit system is fair for moderate use but the lack of rollover punishes bursty users, and costs add up fast at scale. The /agent endpoint is promising but not production-ready. Some major sites (Reddit, LinkedIn) are blocked entirely. And the self-hosted version has real gaps compared to the cloud service.

For developers building AI applications that need web data, it removes weeks of infrastructure work. The time it saves is worth the cost. Just go in knowing what you're paying for.

Try Firecrawl free with 500 credits and see the output quality for yourself.